A tale of a hot dog stand, the Soviets and the need for ‘why.’

This article originally appeared in Quirks Media.

When we at Alpha-Diver were first thinking about how to design a big study drawing on passive data, a story came to mind that I had heard back when I lived in Washington, D.C. It’s a Cold War-era tale, maybe just an urban legend that tour guides tell the busloads of high school students who visit every summer, but I still think it holds an important lesson for us as researchers about the growing trend of using passively collected data in our work, and about what that data can and can’t do for us. A cautionary tale, if you will.

There was a small building in the center courtyard of the Pentagon back in the day. (There’s still a building there but this particular one no longer stands.) As the story goes, the Soviet Union had missiles – nuclear ones, no less – constantly trained on this building. They were convinced it was the entrance to an underground bunker with a top-secret meeting room, with the whole Pentagon essentially acting as a fortress around it.

Why did they think this? Well, the Soviets had their own version of passively collected data: satellite imagery. Every day, their satellites would pass over and take pictures of the site, and every day they would observe a pattern of behavior in those images: there were always military officers going in and out of this building. From this data, they concluded that the building must be important. After all, it was smack in the center of the Pentagon. This information didn’t come from a human spy with an imperfect memory reporting what he or she recalled seeing; it was an objective record of what was happening.

Here’s the thing: that building? It was a hot dog stand. The satellites passed over at the same time every day, most likely around lunchtime, so there were always people milling around it.

I tell you this story because an emerging theme we hear more and more from clients is a desire to get away from “traditional surveys” that ask consumers to recall, report and explain their behavior. There is a push to derive consumer insights purely from the growing suite of passively collected consumer information, such as scanned receipts, loyalty card data and digital activity tracking, that a plethora of data providers now offer.

Some clients firmly believe that passively collected data are a better way to reveal true, actionable insights about consumers’ behavior, because they don’t rely on asking consumers anything or on consumers’ faulty ability to remember. We’ve heard from clients who want to rely solely, or as much as possible, on passive data, believing that any datapoint obtained by asking a respondent can’t be trusted. They posit that the passive data are the “truth” and that if you just look at enough of them, you’ll find patterns that explain behaviors and, by extension, the keys to encouraging more of the behaviors you want. At our firm, we’ve been cautioning against sole reliance on passive data, because we continue to prove that direct interaction with respondents is still valuable in unlocking the why behind their behavior, IF you do it right.

For me, the hot dog stand tale illuminates two big pitfalls of relying solely on passive data:

- It can show you what consumers are doing, but it can’t tell you why. We can see which websites consumers visit, or look at what they put in their shopping carts, but from passive data alone we can only guess at why they surf Facebook during their workday, for example, and what that says about their likelihood of responding to the ads they see in their feed (if they notice them at all).

- The behaviors you can passively observe are not the only behaviors that matter. The Soviets likely attributed outsized importance to that building because it was out in the open, in full view. Similarly, with passive consumer data, we can only analyze what we can measure. We are far from being able to observe everything that a consumer does, sees, hears or smells that might lead them toward a behavior. No single passive data company can offer so complete a picture. (And quite frankly, I’m not sure I want to live in a world where one can.)

This is why we believe that even with the many benefits of passive data, you can’t get to the why of observed behaviors without some active interaction with the people being observed. Now, we do agree completely that simply asking them why they bought that product on Amazon or clicked on that Facebook ad is fruitless. But the idea that we can’t get them to reveal insights into what drives their behavior via a survey instrument is false. We do it every day.

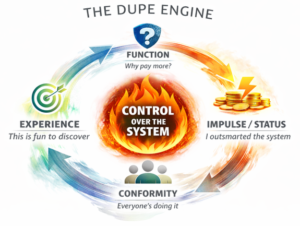

When done well, behavioral science-backed research leverages neurocognitive and neuropsychological “tells” to identify the intrinsic motivations and costs expressed by individuals, and how those are affected by specific contexts of interest (e.g., an online shopping journey). While you’re not likely to get good data by asking people why they do what they do, you can get them to reveal the whys they don’t even consciously recognize themselves, but only if you ask the right questions.

This revealed knowledge can unlock an otherwise unattainable level of fidelity in our understanding of consumers’ attention, thoughts, experiences, decision-making and actions. And yes, it can also help make sense of a myriad of behaviors observed in passively collected data, so we can separate the truly important buildings from the hot dog stands.